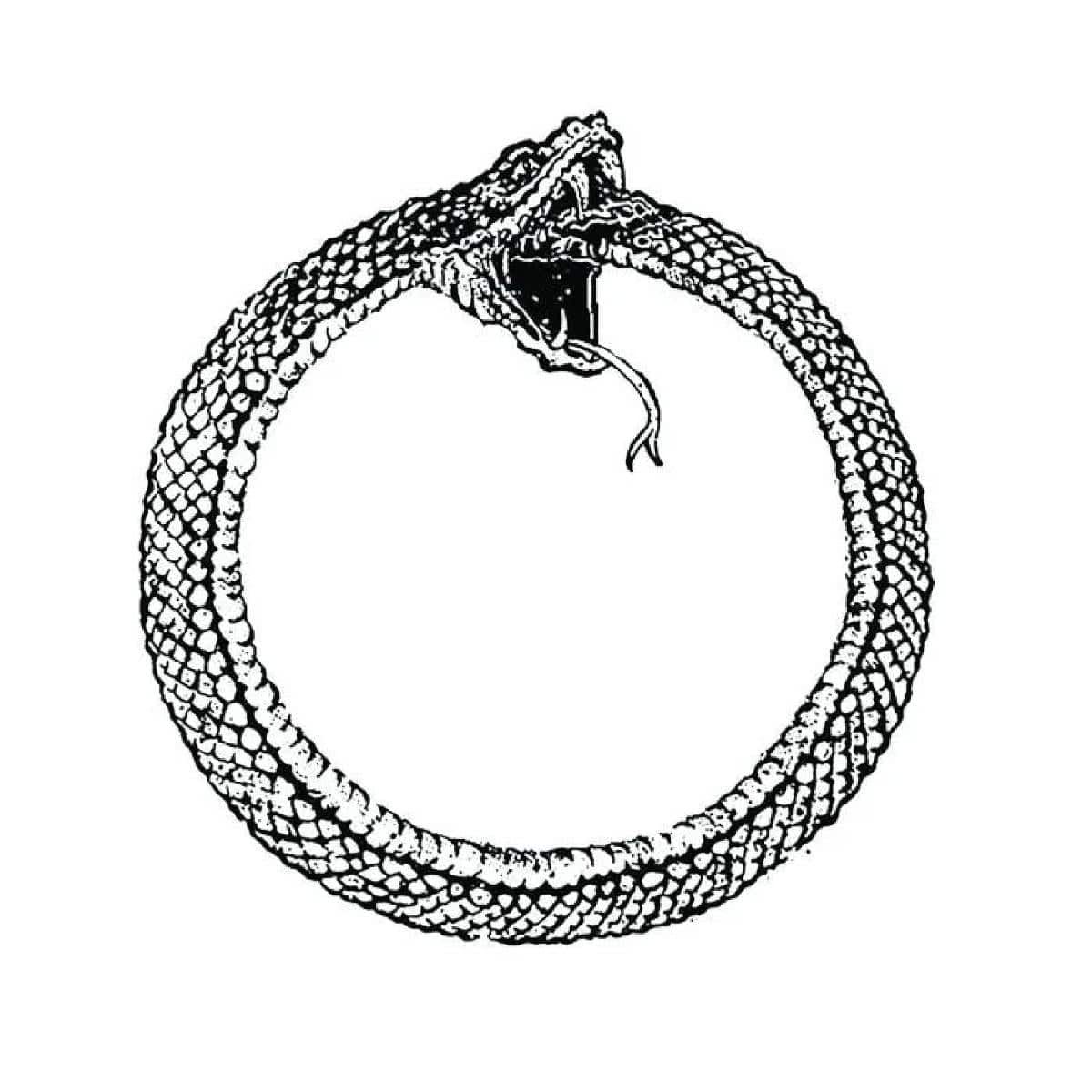

The Ouroboros Problem: AI is starting to eat its own tail

Diminishing returns are already here

Let’s cut right to the chase - AI companies are lying to us.

Now, don’t get me wrong, their products are useful. I use AI every day, personally and professionally. But that does not mean that I buy the vast, endless ocean of bullshit PR spin the CEOs of these companies are trying to push down our throats.

LLMs, and in fact the entire premise of Generative AI, are based on AI’s ability to imitate… not create.

The statement these companies need to sell you on, what they’ve conned investors into believing, and what they must double, triple, and quadruple down on is that this is not the case. As I said before, they are lying - full stop.

The way they continue to sell this lie is by scaling these models out: making them bigger and bigger, using more and more data, and adding specific capabilities that may be useful but distract from the fact that these models are never going to achieve “AGI”. The bet is that product-level improvements on the front end, plus scaling at any cost on the back end, will keep the models getting faster and more capable.

However, there are two fundamental problems:

To meet the infrastructure demand to scale performance (excluding the entire topic of energy generation and distribution), we need to mine more copper in the next 5 years than we’ve mined in the previous 10,000 to build the moderate estimate of data centers required. No, I’m not kidding. (Do yourself a favor and watch):

To scale capabilities, you need novel data sources worth consuming for training purposes at the same level of growth as the models themselves.

LLMs are not intelligent. Generative AI is named that way because these models are rooted in statistical analyses based on prior guesses and feedback on whether those guesses were correct or not.

LLMs know nothing; they guess the statistically most likely next word based on what they are tasked with doing. But the current focus on consumer adoption over everything else is a statistical death sentence for the “scale at all costs” hypothesis.
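
To make “guessing the most likely next word” concrete, here is a deliberately tiny sketch. It is not how a production LLM works (those use neural networks over tokens, not raw word counts); it is a toy bigram counter, and the function names `train_bigrams` and `most_likely_next` are made up for illustration. The core idea is the same though: the prediction comes from what followed what in the training text, not from understanding.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words followed it in the training text."""
    words = text.lower().split()
    follow = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follow[prev][nxt] += 1
    return follow


def most_likely_next(follow, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    options = follow.get(word.lower())
    if not options:
        return None
    return options.most_common(1)[0][0]


if __name__ == "__main__":
    model = train_bigrams("the cat sat on the mat the cat ran")
    print(most_likely_next(model, "the"))
```

Notice that the toy model can only ever echo its training text. Feed it text it produced itself and it has nothing new to learn from.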

In ancient mythology, the Ouroboros (the serpent devouring its own tail) represents eternal recurrence. In Generative AI, the equivalent now has a name: Model Autophagy Disorder, a degradation in model effectiveness over generations caused by training on content and data that models previously generated.

Consumer-grade content (i.e. “AI slop”) has flooded into every aspect of almost every system in the world, and in doing so has been poisoning the very sources of data these models depend on to learn and scale their capabilities.

The more “slop” people produce with AI, the less effective the future states of these models will become.

This is not conjecture - this is an inevitability.

The Great Data Depletion

For over a decade, AI progress has been fueled by a “Golden Record” of human thought—the pre-2023 internet and written materials. It was/is an insane, chaotic, high-entropy, high-volume, but authentic archive of human creativity, imperfection, deplorability, humor, horror, stupidity, and knowledge. But it is becoming increasingly clear that the stock of high-quality, human-generated text is quickly being overtaken by synthetic, AI generated data.

The second a model starts to train on another model’s output, it doesn’t just learn “facts.” It unknowingly absorbs the statistical biases and subtle hallucinations of the model that generated the output. This triggers a degenerative effect known as Model Collapse:

Statistical Thinning: Models gravitate toward the “most probable” word. When they train on their own guesses, the “long-tail” of human nuance—rare metaphors and creative exceptions—is the first thing to be deleted.

The Inbreeding Effect: Just as biological inbreeding amplifies genetic defects, recursive training amplifies “artifacts.” A slight bias in GPT-4 becomes a hard-coded “truth” in GPT-5. I’d argue this is a big part of why GPT-5 landed so far short of the hype.

Variance Decay: The model’s “worldview” shrinks. It becomes incredibly confident about a narrowing slice of reality, eventually producing an “inbred mutant” of language—grammatically perfect but intellectually hollow.
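
The thinning effect is easy to reproduce in miniature. The sketch below is a toy simulation, not a claim about any real model. It assumes a Zipf-like vocabulary standing in for “human” text, and every number in it (vocabulary size, corpus size, number of generations) is an arbitrary choice of mine. Each generation “trains” by counting words in a corpus sampled from the previous generation. Once a rare word fails to show up in a sample, its probability is zero and it can never come back.

```python
import random
from collections import Counter


def next_generation(model, sample_size, rng):
    """Sample a corpus from the current model, then refit by counting words."""
    tokens = list(model)
    probs = [model[t] for t in tokens]
    corpus = rng.choices(tokens, weights=probs, k=sample_size)
    counts = Counter(corpus)
    return {tok: n / sample_size for tok, n in counts.items()}


def simulate_collapse(vocab_size=1000, sample_size=5000, generations=10, seed=0):
    """Return the number of surviving words after each generation."""
    rng = random.Random(seed)
    # Generation 0: Zipf-like frequencies, a few common words and a long tail.
    raw = {f"w{rank}": 1.0 / rank for rank in range(1, vocab_size + 1)}
    total = sum(raw.values())
    model = {tok: weight / total for tok, weight in raw.items()}
    surviving = [len(model)]
    for _ in range(generations):
        model = next_generation(model, sample_size, rng)
        surviving.append(len(model))
    return surviving


if __name__ == "__main__":
    for generation, size in enumerate(simulate_collapse()):
        print(f"generation {generation}: {size} distinct words survive")
```

The surviving vocabulary can only stay flat or shrink from one generation to the next, and the rare words are the ones that go first. Real training pipelines are far more complicated, but this is the basic mechanism behind the long tail disappearing.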

All of this boils down to one very simple statement - be hyped about the possibilities AI promises, but be extremely skeptical of how those promises are being achieved.

This risk is very, very real, so even if you’re “not a technical person” you have got to understand how these models actually operate. We cannot, and should not, blindly accept these capabilities and implement them in our products. Many critics of AI say things like “it’s just fancy autocorrect”, which it sort of is, but that framing also minimizes the real-world impact of failing to closely monitor the underlying models.

The Regulated Reality: MedTech’s New Baseline

The 2025/2026 FDA draft guidance on AI lifecycle management makes one thing clear:

For those of us in regulated industries, “Model Collapse” translates directly to “Diagnostic Failure.”

What can we do about it?

Enforce GxP for Data Pipelines: Treat your training data with the same rigor as raw materials in a manufacturing plant. In ISO 13485:2016 terms, this means applying Clause 4.1.6 (validation of software used in the quality management system) to your data scrapers and Clause 4.2.4 (Control of Documents) to your data lineages.

Synthetic vs. Real Benchmarking: Never validate a model solely on synthetic data. You must maintain a “Protected Human Archive”—a gold-standard dataset of verified clinical cases used only for final validation.

Establish an AI Governance Board: Move AI out of the “R&D sandbox” and into the QMS. Every retrained model must go through a formal Impact Assessment to ensure that recursive training hasn’t introduced bias or degraded accuracy for sub-populations.

Focus on Data Provenance* and AI Observability** over almost everything else!
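
One practical piece of the “Protected Human Archive” idea is making sure the archive never leaks into training data. Here is a minimal sketch of that check. It assumes cases are plain text and only catches exact matches after whitespace and case normalization; the names `fingerprint` and `find_leaks` are hypothetical, and a real pipeline would likely need near-duplicate detection on top.

```python
import hashlib


def fingerprint(text):
    """Hash a case after normalizing case and whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_leaks(training_texts, protected_texts):
    """Return every training item that also appears in the protected archive."""
    protected = {fingerprint(t) for t in protected_texts}
    return [t for t in training_texts if fingerprint(t) in protected]


if __name__ == "__main__":
    archive = ["Case 17: verified clinical finding."]
    training = ["case 17:  verified clinical finding.", "Unrelated note."]
    print(find_leaks(training, archive))
```

If this returns anything other than an empty list, the final validation set is no longer independent and the validation result should not be trusted.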

Data Provenance*

In this “poisoned well” environment, Data Provenance is no longer a “nice-to-have” metadata field; it is the fundamental infrastructure for AI to remain effective.

Data Provenance is the documented trail of a piece of data’s origin, creation, and transformation. In 2026, it serves three critical roles:

Contamination Filtering: Actively identifying content that carries “synthetic” markers and stripping it out of training sets.

Verification & Attribution: Ensuring that training data comes from verified human sources (e.g., licensed archives, peer-reviewed journals) rather than the trash heap of AI slop found on the open web.

Auditability: In regulated sectors like MedTech or Finance, you must be able to prove that a model’s decision-making isn’t based on a recursive hallucination.
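
As a rough illustration of the three roles above, here is what a bare-bones provenance record could look like. Everything in it is an assumption for the sake of the example: the field names, the `TRUSTED_SOURCES` labels, and the idea that each item arrives already labeled as human or synthetic (producing that label reliably is the hard part, and this sketch does not solve it).

```python
from dataclasses import dataclass
from hashlib import sha256

# Hypothetical source labels; a real pipeline would define its own.
TRUSTED_SOURCES = {"licensed_archive", "peer_reviewed_journal"}


@dataclass(frozen=True)
class ProvenanceRecord:
    content_sha256: str           # fingerprint of the exact text that was ingested
    source: str                   # where the text came from
    origin: str                   # "human", "synthetic", or "unknown"
    transformations: tuple = ()   # ordered processing steps applied so far


def make_record(text, source, origin, transformations=()):
    """Build a record whose hash ties it to one specific piece of content."""
    digest = sha256(text.encode("utf-8")).hexdigest()
    return ProvenanceRecord(digest, source, origin, tuple(transformations))


def admit_to_training(record):
    """Contamination filter: only verified-human data from a trusted source."""
    return record.origin == "human" and record.source in TRUSTED_SOURCES


def audit_trail(record):
    """One-line summary an auditor could read."""
    steps = " -> ".join(record.transformations) or "none"
    return (f"{record.content_sha256[:12]} from {record.source} "
            f"({record.origin}); steps: {steps}")


if __name__ == "__main__":
    rec = make_record("Verified case text.", "peer_reviewed_journal", "human",
                      ["dedupe", "de-identify"])
    print(admit_to_training(rec), audit_trail(rec))
```

The record is frozen on purpose: a lineage entry that can be silently edited after the fact is not much of an audit trail.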

Why AI Observability is Key**

Traditional monitoring tells you if a server is up; AI Observability tells you if the model is losing its mind.

In the age of the Ouroboros problem, observability is the only way to detect the early signs of Model Collapse:

Drift Detection: Spotting when a model’s output distribution starts to shrink or diverge from the original human “ground truth.”

Entropy Monitoring: Tracking the “predictability” of a model. If a model becomes too predictable, it’s a sign that it is regressing to the mean due to recursive training.

Anomaly Attribution: Tracing a hallucination back to the specific poisoned data in a training set is no longer optional.
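
Drift and entropy checks do not need exotic tooling to get started. The sketch below is one simple way to approximate them, assuming you keep a baseline sample of human-written text and compare it with recent model outputs at the word level. Shannon entropy and Jensen-Shannon divergence are standard measures; using them on whitespace-split words is my simplification, and what counts as “too low” or “too far” is something you would have to calibrate yourself.

```python
import math
from collections import Counter


def token_distribution(texts):
    """Turn a list of texts into word -> relative frequency."""
    counts = Counter(tok for text in texts for tok in text.lower().split())
    total = sum(counts.values())
    return {tok: n / total for tok, n in counts.items()}


def shannon_entropy(dist):
    """Entropy in bits; lower means more predictable output."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits: 0 for identical, 1 for disjoint."""
    vocab = set(p) | set(q)
    mix = {t: 0.5 * (p.get(t, 0.0) + q.get(t, 0.0)) for t in vocab}

    def kl_to_mix(a):
        return sum(a[t] * math.log2(a[t] / mix[t]) for t in a if a[t] > 0)

    return 0.5 * kl_to_mix(p) + 0.5 * kl_to_mix(q)


if __name__ == "__main__":
    baseline = token_distribution(["the rare heron waded past a rusted gate"])
    current = token_distribution(["the the the gate the the the gate"])
    print("entropy baseline:", shannon_entropy(baseline))
    print("entropy current: ", shannon_entropy(current))
    print("drift (JS):      ", js_divergence(baseline, current))
```

Tracked over time, a falling entropy line together with a rising divergence from the human baseline is the kind of early signal the first two checks describe.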

The “Ouroboros Problem” shouldn’t frighten us away from AI; it should focus our efforts.

We should embrace AI for what it is—a world-class engine for efficiency, pattern recognition, and administrative relief. In use cases like medical coding, literature summarization, or initial drafting of regulatory documentation, AI is an unparalleled force multiplier. It saves thousands of human hours and allows experts to focus on the high-level decision-making only they can provide.

However, as we scale these tools, we must understand the real risks inherent in the architecture. When we move from administrative support to clinical decision-making or autonomous design, the safety of the system depends entirely on the “purity” of the data and the rigor of our observability.

The goal isn’t to stop the snake from moving—it’s to ensure it’s moving forward toward innovation, rather than turning inward toward collapse. By prioritizing data provenance and robust governance, we can harness the power of AI without being consumed by its echoes.